AI

Faster and Smarter: How Appnovation uses AI to Drive Client Results

26 November, 2025|5 min Read

Speed and efficiency matter more than ever in today’s digital world - but not at the expense of accuracy, trust, or accountability. At Appnovation, we help clients keep pace with innovation by using…

AI

11 March, 2026|5 min

4 Things Your Content Is Missing for AI Search

Engineering & Platform Development

02 March, 2026|6 min

How to Migrate Content at Scale Without Losing Rankings

AI

11 February, 2026|8 min

Today’s Email Marketing: AI & Hyperpersonalization - What Digital Leaders Need to Do Next

Creative & Experience Design

26 January, 2026|5 min

The Missing Layer in CX Strategy: Experience Design That Evolves with the User

Strategy & Insights

15 January, 2026|6 min

Digital Delivery That Actually Works in 2026

AI

13 January, 2026|4 min

The Synthetic Employee: Why Your Atlassian AI Needs an Org Chart, Not Just a License

AI

08 December, 2025|5 min

What Is GEO and Why It Matters Now

AI

26 November, 2025|5 min

Faster and Smarter: How Appnovation uses AI to Drive Client Results

Creative & Experience Design

04 November, 2025|4 min

Why Marketers Can’t Ignore AI-Powered UX Design in the Age of Hyper-Personalization

Strategy & Insights

07 October, 2025|3 min

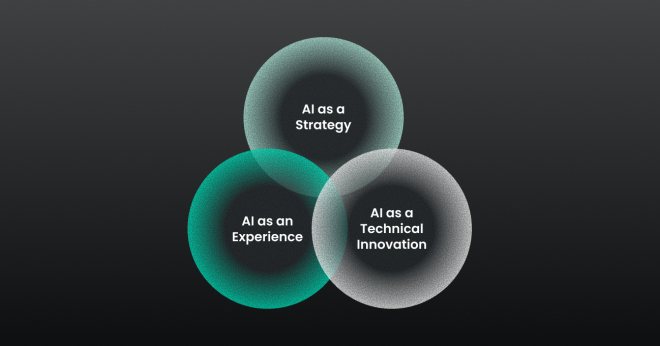

Unlocking Untapped Potential: Entering the World of AI & Data

Data & Analytics

01 October, 2025|3 min

Appno in Action: Transforming Data into a Revenue-Generating Platform for Financial Services

Strategy & Insights

24 September, 2025|3 min